Physics 252: Modern Physics! previous index next Physics 152: previous index next

Kinetic Theory of Gases: A Brief Review

Michael Fowler

Bernoulli's Picture

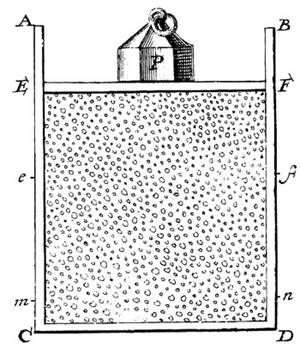

Daniel Bernoulli, in 1738, was the first to understand air pressure from a molecular point of view. He drew a picture of a vertical cylinder, closed at the bottom, with a piston at the top, the piston having a weight on it, both piston and weight being supported by the air pressure inside the cylinder.

He described what went on inside the cylinder as follows: “let the cavity contain very minute corpuscles, which are driven hither and thither with a very rapid motion; so that these corpuscles, when they strike against the piston and sustain it by their repeated impacts, form an elastic fluid which will expand of itself if the weight is removed or diminished…”

Sad to report, his insight, although essentially correct, was not widely accepted. Most scientists believed that the molecules in a gas stayed more or less in place, repelling each other from a distance, held somehow in the ether.

The Link between Molecular Energy and Pressure

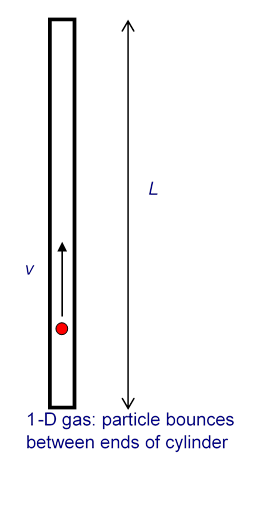

It is not difficult to extend Bernoulli’s picture to a quantitative description, relating the gas pressure to the molecular velocities. As a warm-up exercise, let us consider a single perfectly elastic particle, of mass $m$, bouncing rapidly back and forth at speed $v$ inside a narrow cylinder of length $L$ with a piston at one end, so all motion is along the same line. What is the force on the piston?

Obviously, the piston doesn’t feel a smooth continuous force, but a series of equally spaced impacts. However, if the piston is much heavier than the particle, this will have the same effect as a smooth force over times long compared with the interval between impacts. So what is the value of the equivalent smooth force?

Using Newton’s law in the form force = rate of change of momentum, we see that the particle’s momentum changes by $2mv$ each time it hits the piston. The time between hits is $2L/v$, so the frequency of hits is $v/2L$ per second. This means that if there were no balancing force, by conservation of momentum the particle would cause the momentum of the piston to change by $2mv \times v/2L$ units in each second. This is the rate of change of momentum, and so must be equal to the balancing force, which is therefore

$$F = \frac{mv^2}{L}.$$
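As a rough check on this argument, the sketch below adds up the momentum delivered by the individual impacts over a long time and compares the average with $mv^2/L$. The function name and the numerical values are illustrative assumptions, not part of the original notes.

```python
def average_force_on_piston(m, v, L, duration):
    """Average force from the discrete impacts of one particle during `duration` seconds."""
    time_between_hits = 2 * L / v               # round trip along the cylinder
    hits = int(duration / time_between_hits)    # whole impacts completed
    momentum_delivered = hits * 2 * m * v       # each elastic impact transfers 2mv
    return momentum_delivered / duration


# Illustrative values (kg, m/s, m, s), chosen for this sketch only.
m, v, L, duration = 1.0e-3, 50.0, 0.1, 10.0
print("impact average:", average_force_on_piston(m, v, L, duration))
print("m v^2 / L     :", m * v**2 / L)
```

The two numbers agree closely once the duration covers many impacts, which is the sense in which the series of blows acts like a smooth force.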

We now generalize to the case of many particles bouncing around inside a rectangular box, of length $L$ in the $x$-direction (which is along an edge of the box). The total force on the side of area $A$ perpendicular to the $x$-direction is just a sum of single particle terms, the relevant velocity being the component of the velocity in the $x$-direction. The pressure is just the force per unit area, $P = F/A$. Of course, we don’t know what the velocities of the particles are in an actual gas, but it turns out that we don’t need the details. If we sum $N$ contributions, one from each particle in the box, each contribution proportional to $v_x^2$ for that particle, the sum just gives us $N$ times the average value of $v_x^2$. That is to say,

$$P = \frac{F}{A} = \frac{Nm\overline{v_x^2}}{LA} = \frac{Nm\overline{v_x^2}}{V},$$

where there are $N$ particles in a box of volume $V$. Next we note that the particles are equally likely to be moving in any direction, so the average value of $v_x^2$ must be the same as that of $v_y^2$ or $v_z^2$, and since $v^2 = v_x^2 + v_y^2 + v_z^2$, it follows that

$$P = \frac{Nm\overline{v^2}}{3V}.$$

This is a surprisingly simple result! The macroscopic pressure of a gas relates directly to the average kinetic energy per molecule.
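The isotropy step, that each velocity component carries one third of the mean square speed, can be illustrated numerically. The sketch below is only a sanity check under an assumption of my own: the random velocities are built from independent Gaussian components, used here simply as a convenient way to get a sample with no preferred direction. The helper names are hypothetical.

```python
import random


def mean_squares(n_samples=20000, seed=0):
    """Return the averages of vx^2, vy^2, vz^2 and v^2 for isotropic random velocities."""
    rng = random.Random(seed)
    sums = [0.0, 0.0, 0.0]
    for _ in range(n_samples):
        # Independent Gaussian components give a direction-independent sample.
        for axis in range(3):
            component = rng.gauss(0.0, 1.0)
            sums[axis] += component * component
    vx2, vy2, vz2 = (s / n_samples for s in sums)
    return vx2, vy2, vz2, vx2 + vy2 + vz2


def pressure(n_particles, mass, mean_v2, volume):
    """Kinetic-theory pressure P = N m <v^2> / (3 V)."""
    return n_particles * mass * mean_v2 / (3.0 * volume)


vx2, vy2, vz2, v2 = mean_squares()
print("fractions of <v^2>:", vx2 / v2, vy2 / v2, vz2 / v2)
```

Each printed fraction should come out close to one third, up to statistical scatter.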

Of course, in the above we have not thought about possible complications caused by interactions between particles, but in fact for gases like air at room temperature these interactions are very small. Furthermore, it is well established experimentally that most gases satisfy the Gas Law over a wide temperature range:

$$PV = nRT$$

for $n$ moles of gas, that is, $n = N/N_A$, with $N_A$ Avogadro’s number and $R$ the gas constant.

Introducing Boltzmann’s constant $k = R/N_A$, it is easy to check from our result for the pressure and the ideal gas law that the average molecular kinetic energy is proportional to the absolute temperature,

$$\overline{\tfrac{1}{2}mv^2} = \tfrac{3}{2}kT.$$

Boltzmann’s constant $k = 1.38 \times 10^{-23}$ joules/K.
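To get a feel for the numbers, the relation $\frac{1}{2}m\overline{v^2} = \frac{3}{2}kT$ can be turned into a root-mean-square speed, $v_{rms} = \sqrt{3kT/m}$. The sketch below does this for a nitrogen molecule at 300 K. The molecular mass (28 atomic mass units) and the temperature are assumptions chosen for illustration.

```python
import math

K_BOLTZMANN = 1.38e-23          # J/K, the value quoted in the text
M_N2 = 28.0 * 1.66e-27          # kg, assumed mass of a nitrogen molecule


def rms_speed(mass, temperature):
    """Root-mean-square speed from (1/2) m <v^2> = (3/2) k T."""
    return math.sqrt(3.0 * K_BOLTZMANN * temperature / mass)


print("v_rms for N2 at 300 K (m/s):", rms_speed(M_N2, 300.0))
```

The result is a few hundred meters per second, comparable to the speed of sound in air.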

Maxwell finds the Velocity Distribution

By the 1850’s, various difficulties with the existing theories of heat, such as the caloric theory, caused some rethinking, and people took another look at the kinetic theory of Bernoulli, but little real progress was made until Maxwell attacked the problem in 1859. Maxwell worked with Bernoulli’s picture, that the atoms or molecules in a gas were perfectly elastic particles, obeying

The relevant microscopic information is not knowledge of the position and velocity of every molecule at every instant of time, but just the distribution function, that is to say, what percentage of the molecules are in a certain part of the container, and what percentage have velocities within a certain range, at each instant of time. For a gas in thermal equilibrium, the distribution function is independent of time. Ignoring tiny corrections for gravity, the gas will be distributed uniformly in the container, so the only unknown is the velocity distribution function.

Random collisions can produce a well-defined velocity distribution even if we start with all the molecules having the same speed.
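A toy simulation makes this plausible. The sketch below is a simplified stand-in of my own, not a reconstruction of any particular applet: equal-mass particles move in two dimensions, all starting at speed 1, and randomly chosen pairs undergo elastic collisions in which the relative velocity is turned through a random angle. Each collision conserves momentum and kinetic energy, yet the speeds quickly spread out into a smooth distribution.

```python
import math
import random
import statistics


def relax_by_collisions(n_particles=2000, n_collisions=20000, seed=1):
    """Return the particle speeds after random elastic pair collisions in 2D."""
    rng = random.Random(seed)
    # Start with every particle at speed 1 in a random direction.
    vel = []
    for _ in range(n_particles):
        angle = rng.uniform(0.0, 2.0 * math.pi)
        vel.append([math.cos(angle), math.sin(angle)])
    for _ in range(n_collisions):
        i, j = rng.sample(range(n_particles), 2)
        # Centre-of-mass velocity is unchanged by the collision.
        cx = 0.5 * (vel[i][0] + vel[j][0])
        cy = 0.5 * (vel[i][1] + vel[j][1])
        # Relative velocity keeps its magnitude but gets a new random direction.
        rel = math.hypot(vel[i][0] - vel[j][0], vel[i][1] - vel[j][1])
        angle = rng.uniform(0.0, 2.0 * math.pi)
        rx, ry = rel * math.cos(angle), rel * math.sin(angle)
        vel[i] = [cx + 0.5 * rx, cy + 0.5 * ry]
        vel[j] = [cx - 0.5 * rx, cy - 0.5 * ry]
    return [math.hypot(vx, vy) for vx, vy in vel]


speeds = relax_by_collisions()
print("mean square speed:", sum(s * s for s in speeds) / len(speeds))
print("spread of speeds :", statistics.pstdev(speeds))
```

The mean square speed stays at its starting value, as energy conservation requires, while the spread of speeds grows from zero to a steady value.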

Velocity Space

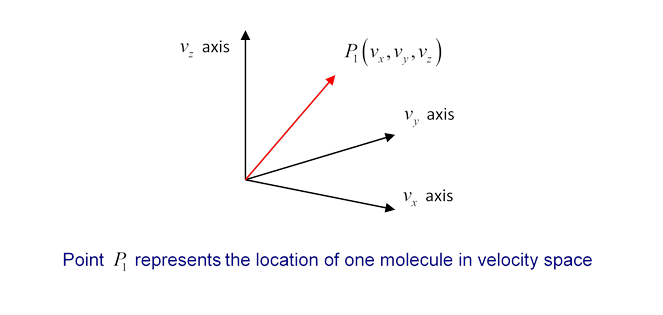

What does a velocity distribution function look like? Suppose at some instant in time one particular molecule has velocity $\vec{v} = (v_x, v_y, v_z)$. We can record this information by constructing a three-dimensional velocity space, with axes $v_x$, $v_y$, $v_z$, and putting in a point P1 representing the molecule’s velocity (the red arrow is of course $\vec{v}$):

Now imagine that at that instant we could measure the velocities of all the molecules in a container, and put $N$ points in the velocity space. Since $N$ is of order $10^{21}$ for 100 ccs of gas, this is not very practical! But we can imagine what the result would be: a cloud of points in velocity space, equally spread in all directions (there’s no reason molecules would prefer to be moving in the $x$-direction, say, rather than the $y$-direction) and thinning out on going away from the origin towards higher and higher velocities.

Now, if we could keep monitoring the situation as time passes, individual points would move around, as molecules bounced off the walls, or each other, so you might think the cloud would shift around a bit. But there’s a vast number of molecules in any realistic macroscopic situation, and for any reasonably sized container it’s safe to assume that the number of molecules in any small region of velocity space remains pretty much constant. Obviously, this cannot be true for a region of velocity space so tiny that it only contains one or two molecules on average. But it can be shown statistically that if there are $N$ molecules in a particular small volume of velocity space, the fluctuation of the number with time is of order $\sqrt{N}$, so a region containing a million molecules will vary in numbers by about one part in a thousand, a trillion molecule region by one part in a million. Since 100 ccs of air contains of order $10^{21}$ molecules, we can in practice divide the region of velocity space occupied by the gas into a billion cells, and still have variation in each cell of order one part in a million!
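The arithmetic behind these estimates is short enough to spell out. The sketch below simply evaluates $\sqrt{N}/N = 1/\sqrt{N}$ for the cases mentioned; the function name is an illustrative choice.

```python
import math


def relative_fluctuation(n_molecules):
    """Fractional fluctuation sqrt(N)/N for a region holding N molecules on average."""
    return 1.0 / math.sqrt(n_molecules)


print("million-molecule region :", relative_fluctuation(1e6))
print("trillion-molecule region:", relative_fluctuation(1e12))

# 10^21 molecules shared among a billion cells of velocity space.
molecules_per_cell = 1e21 / 1e9
print("molecules per cell      :", molecules_per_cell)
print("fluctuation per cell    :", relative_fluctuation(molecules_per_cell))
```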

The bottom line is that for a macroscopic amount of gas, fluctuations in density, both in ordinary space and in velocity space, are for all practical purposes negligible, and we can take the gas to be smoothly distributed in both spaces.

Maxwell’s Symmetry Argument

Maxwell found the velocity distribution function for gas molecules in thermal equilibrium by the following elegant argument based on symmetry.

For a gas of $N$ particles, let the number of particles having velocity in the $x$-direction between $v_x$ and $v_x + dv_x$ be $N f_1(v_x)\,dv_x$. In other words, $f_1(v_x)\,dv_x$ is the fraction of all the particles having $x$-direction velocity lying in the interval between $v_x$ and $v_x + dv_x$. (I’ve written $f_1$ instead of $f$ to help remember this function refers to only one component of the velocity vector.)

If we add the fractions for all possible values of $v_x$, the result must of course be 1:

$$\int_{-\infty}^{\infty} f_1(v_x)\,dv_x = 1.$$

But there’s nothing special about the $x$-direction: for gas molecules in a container, at least away from the walls, all directions look the same, so the same function $f_1$ will give the probability distributions in the other directions too. It follows immediately that the number of particles with velocity lying between $v_x$ and $v_x + dv_x$, $v_y$ and $v_y + dv_y$, and $v_z$ and $v_z + dv_z$ must be:

$$N f_1(v_x)\,dv_x\, f_1(v_y)\,dv_y\, f_1(v_z)\,dv_z = N f_1(v_x) f_1(v_y) f_1(v_z)\,dv_x\,dv_y\,dv_z.$$

Note that this distribution function, when integrated over all possible values of the three components of velocity, gives the total number of particles to be $N$, as it should (since integrating over each $f_1$ gives unity).

Next comes the clever part: since any direction is as good as any other direction, the distribution function must depend only on the total speed of the particle, not on the separate velocity components. Therefore, Maxwell argued, it must be that:

$$f_1(v_x)\, f_1(v_y)\, f_1(v_z) = F\!\left(v_x^2 + v_y^2 + v_z^2\right)$$

where $F$ is another unknown function. However, it is apparent that the product of the functions on the left is reflected in the sum of variables on the right. It will only come out that way if the variables appear in an exponent in the functions on the left. In fact, it is easy to check that this equation is solved by a function of the form:

$$f_1(v_x) = A e^{-B v_x^2}.$$

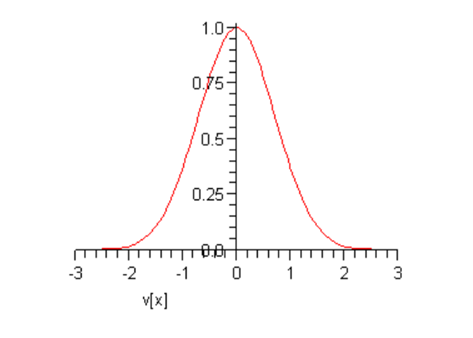

This curve is called a Gaussian: it’s centered at the origin, and falls off very rapidly as $v_x$ increases. Taking $A = B = 1$ just to see the shape, we find:

At this point, $A$ and $B$ are arbitrary constants; we shall eventually find their values for an actual sample of gas at a given temperature. Notice that (following Maxwell) we have put a minus sign in the exponent because there must eventually be fewer and fewer particles on going to higher speeds, certainly not a diverging number.
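The check that a Gaussian satisfies Maxwell's condition takes only a line or two. Writing the one-component distribution as $f_1(v_x) = A e^{-B v_x^2}$, with the other two components handled the same way, the product collapses into a function of the squared speed alone. The symbol $s$ below is just a placeholder argument introduced for this sketch.

```latex
\begin{aligned}
f_1(v_x)\, f_1(v_y)\, f_1(v_z)
  &= A e^{-B v_x^2} \cdot A e^{-B v_y^2} \cdot A e^{-B v_z^2} \\
  &= A^3 e^{-B\,(v_x^2 + v_y^2 + v_z^2)} \\
  &= F\!\left(v_x^2 + v_y^2 + v_z^2\right),
  \qquad F(s) = A^3 e^{-B s}.
\end{aligned}
```

The product of exponentials turns into the exponential of a sum, which is exactly the feature the symmetry argument demands.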

Multiplying together the probability distributions for the three directions gives the distribution in terms of particle speed $v$, where $v^2 = v_x^2 + v_y^2 + v_z^2$. Since all velocity directions are equally likely, it is clear that the natural distribution function is that giving the number of particles having speed between $v$ and $v + dv$.

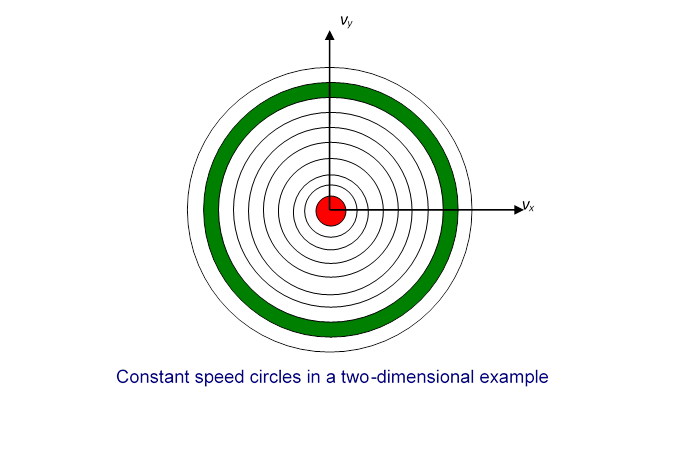

From the graph above, it is clear that the most likely value of $v_x$ is zero. If the gas molecules were restricted to one dimension, just moving back and forth on a line, then the most likely value of their speed would also be zero. However, for gas molecules free to move in two or three dimensions, the most likely value of the speed is not zero. It’s easiest to see this in a two-dimensional example. Suppose we plot the points P representing the velocities of molecules in a region near the origin, so the density of points doesn’t vary much over the extent of our plot (we’re staying near the top of the peak in the one-dimensional curve shown above).

Now divide the two-dimensional space into regions corresponding to equal increments in speed:

$$0 \le v < \Delta v,\quad \Delta v \le v < 2\Delta v,\quad 2\Delta v \le v < 3\Delta v,\ \ldots$$

In the two-dimensional space, $v = \text{constant}$ is a circle, so this division of the plane is into annular regions between circles whose successive radii are $\Delta v$ apart.

Each of these annular areas corresponds to the same speed increment $\Delta v$. In particular, the green area, between a circle of radius $n\Delta v$ and one of radius $(n+1)\Delta v$ for some whole number $n$, corresponds to the same speed increment as the small red circle in the middle, which corresponds to speeds between 0 and $\Delta v$. Therefore, if the molecular speeds are pretty evenly distributed in this near-the-origin area of the plane, there will be a lot more molecules with speeds between $n\Delta v$ and $(n+1)\Delta v$ than between 0 and $\Delta v$, so the most likely speed will not be zero. To find out what it actually is, we have to put this area argument together with the Gaussian fall off in density on going far from the origin. We’ll discuss this shortly.
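The area argument is easy to quantify. The sketch below compares the area of each ring of width $\Delta v$ with the area of the central disc; the ring index and the function name are illustrative choices, not taken from the figure.

```python
import math


def annulus_area(n, dv):
    """Area of the ring between circles of radius n*dv and (n+1)*dv."""
    return math.pi * ((n + 1) * dv) ** 2 - math.pi * (n * dv) ** 2


dv = 1.0
central_disc = annulus_area(0, dv)      # speeds between 0 and dv
for n in range(5):
    ratio = annulus_area(n, dv) / central_disc
    print(f"ring {n}: area is {ratio:.0f} times the central disc")
```

The ratio grows as $2n + 1$, so with a roughly uniform density of points the outer rings hold many more molecules than the central disc.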

The same argument works in three dimensions; it’s just a little more difficult to visualize. Instead of concentric circles, we have concentric spheres. All points lying on a spherical surface centered at the origin correspond to the same speed.

Let us now figure out the distribution of particles as a function of speed. The distribution in the three-dimensional space $(v_x, v_y, v_z)$ is from Maxwell’s analysis

$$N f_1(v_x) f_1(v_y) f_1(v_z)\,dv_x\,dv_y\,dv_z = N A^3 e^{-B(v_x^2 + v_y^2 + v_z^2)}\,dv_x\,dv_y\,dv_z = N A^3 e^{-Bv^2}\,dv_x\,dv_y\,dv_z.$$

To translate this to the number of particles having speed between $v$ and $v + dv$ we need to figure out how many of those little $dv_x\,dv_y\,dv_z$ boxes there are corresponding to speeds between $v$ and $v + dv$. In other words, what is the volume of velocity space between the two neighboring spheres, both centered at the origin, the inner one with radius $v$, the outer one infinitesimally bigger, with radius $v + dv$? Since $dv$ is so tiny, this volume is just the area of the sphere multiplied by $dv$, that is, $4\pi v^2\,dv$.

Finally, then, the probability distribution as a function of speed is:

$$f(v)\,dv = 4\pi v^2 A^3 e^{-Bv^2}\,dv.$$

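Of course, our job isn’t over: we still have these two unknown constants $A$ and $B$. However, just as for $f_1(v_x)$, the function $f(v)\,dv$ is the fraction of the molecules corresponding to speeds between $v$ and $v + dv$, and all these fractions taken together must add up to 1.

That is,

$$\int_0^{\infty} f(v)\,dv = 1.$$

We need the standard result $\int_0^{\infty} x^2 e^{-Bx^2}\,dx = \frac{1}{4}\sqrt{\pi/B^3}$ (a derivation can be found in my 152 Notes on Exponential Integrals), and find:

$$4\pi A^3 \cdot \frac{1}{4}\sqrt{\frac{\pi}{B^3}} = 1.$$

This means that there is really only one arbitrary variable left: if we can find $B$, this equation gives us $A$, that is, $4\pi A^3 = 4B^{3/2}/\sqrt{\pi}$, and $4\pi A^3$ is what appears in $f(v)$.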

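Looking at $f(v)$, we notice that $B$ is a measure of how far the distribution spreads from the origin: if $B$ is small, the distribution drops off more slowly, so the average particle is more energetic. Recall now that the average kinetic energy of the particles is related to the temperature by $\overline{\frac{1}{2}mv^2} = \frac{3}{2}kT$. This means that $B$ is related to the inverse temperature.

In fact, since $f(v)\,dv$ is the fraction of particles in the interval $dv$ at $v$, and those particles have kinetic energy $\frac{1}{2}mv^2$, we can use the probability distribution to find the average kinetic energy per particle:

$$\overline{\tfrac{1}{2}mv^2} = \int_0^{\infty} \tfrac{1}{2}mv^2 f(v)\,dv.$$

To do this integral we need another standard result: $\int_0^{\infty} x^4 e^{-Bx^2}\,dx = \frac{3}{8}\sqrt{\pi/B^5}$. We find:

$$\overline{\tfrac{1}{2}mv^2} = \frac{3m}{4B}.$$

Substituting the value for the average kinetic energy in terms of the temperature of the gas,

$$\overline{\tfrac{1}{2}mv^2} = \tfrac{3}{2}kT,$$

gives $B = m/2kT$, so $4\pi A^3 = 4\pi \left(m/2\pi kT\right)^{3/2}$.

This means the distribution function

$$f(v) = 4\pi \left(\frac{m}{2\pi kT}\right)^{3/2} v^2 e^{-mv^2/2kT} = 4\pi \left(\frac{m}{2\pi kT}\right)^{3/2} v^2 e^{-E/kT}$$

where $E$ is the kinetic energy of the molecule.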
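The two conditions used to fix the constants can be checked numerically. The sketch below integrates the speed distribution $f(v) = 4\pi (m/2\pi kT)^{3/2} v^2 e^{-mv^2/2kT}$ with a simple midpoint rule and confirms that the total probability is 1 and the mean kinetic energy is $\frac{3}{2}kT$. The nitrogen mass, the temperature, the 5000 m/s cutoff and the helper names are assumptions made for this illustration.

```python
import math

K_BOLTZMANN = 1.38e-23          # J/K, the value quoted in the text
M_N2 = 28.0 * 1.66e-27          # kg, assumed mass of a nitrogen molecule


def maxwell_speed_pdf(v, mass, temperature):
    """Speed distribution f(v) = 4 pi A^3 v^2 exp(-B v^2) with B = m / 2kT."""
    b = mass / (2.0 * K_BOLTZMANN * temperature)
    prefactor = 4.0 * math.pi * (b / math.pi) ** 1.5     # 4 pi A^3
    return prefactor * v * v * math.exp(-b * v * v)


def integrate(func, upper, steps=20000):
    """Midpoint-rule integral of func from 0 to upper."""
    h = upper / steps
    return sum(func((i + 0.5) * h) for i in range(steps)) * h


T = 300.0
V_CUTOFF = 5000.0   # m/s; the distribution is negligible beyond this for N2 at 300 K
norm = integrate(lambda v: maxwell_speed_pdf(v, M_N2, T), V_CUTOFF)
mean_ke = integrate(
    lambda v: 0.5 * M_N2 * v * v * maxwell_speed_pdf(v, M_N2, T), V_CUTOFF
)
print("total probability:", norm)
print("mean KE / kT     :", mean_ke / (K_BOLTZMANN * T))
```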

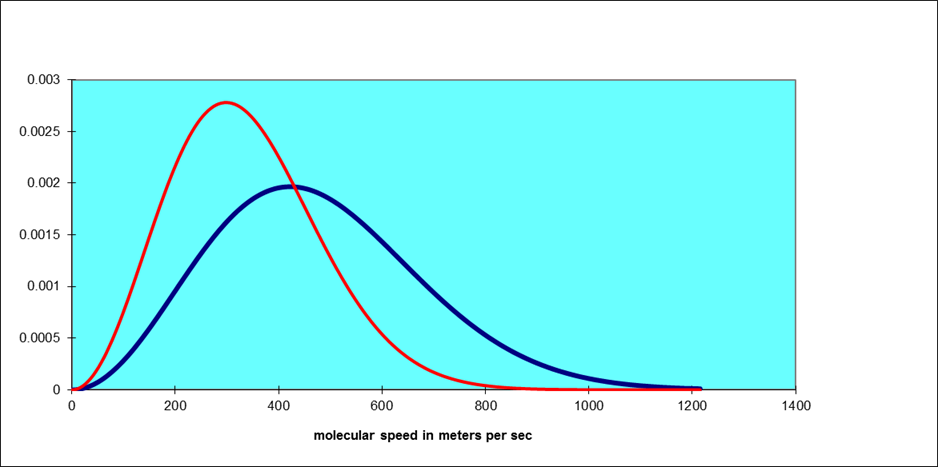

Note that this function increases parabolically from zero for low speeds, then curves round to reach a maximum and finally decreases exponentially. As the temperature increases, the position of the maximum shifts to the right. The total area under the curve is always one, by definition. For air molecules (say, nitrogen) at room temperature the curve is the blue one in the figure. The red one is for an absolute temperature down by a factor of two.
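The position of the maximum follows from setting the derivative of $v^2 e^{-mv^2/2kT}$ to zero, which gives a most probable speed $v_p = \sqrt{2kT/m}$. The sketch below evaluates this for nitrogen and cross-checks it against a brute-force search on a grid. The nitrogen mass, the temperatures and the function names are assumptions for illustration.

```python
import math

K_BOLTZMANN = 1.38e-23          # J/K, the value quoted in the text
M_N2 = 28.0 * 1.66e-27          # kg, assumed mass of a nitrogen molecule


def most_probable_speed(mass, temperature):
    """Speed at which v^2 exp(-m v^2 / 2kT) is largest."""
    return math.sqrt(2.0 * K_BOLTZMANN * temperature / mass)


def speed_weight(v, mass, temperature):
    """Unnormalized speed distribution v^2 exp(-m v^2 / 2kT)."""
    return v * v * math.exp(-mass * v * v / (2.0 * K_BOLTZMANN * temperature))


def grid_peak(mass, temperature, v_max=3000.0, step=0.5):
    """Locate the maximum by brute force on a grid of speeds, as a cross-check."""
    speeds = [i * step for i in range(int(v_max / step) + 1)]
    return max(speeds, key=lambda v: speed_weight(v, mass, temperature))


for T in (300.0, 150.0):
    print(T, "K: formula", most_probable_speed(M_N2, T), "grid", grid_peak(M_N2, T))
```

Halving the absolute temperature moves the peak to the left by a factor of $\sqrt{2}$.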

What about Potential Energy?

Maxwell’s analysis solves the problem of finding the statistical velocity distribution of molecules of an ideal gas in a box at a definite temperature $T$: the relative probability of a molecule having velocity $\vec{v}$ is proportional to $e^{-mv^2/2kT} = e^{-E/kT}$. The position distribution is taken to be uniform: the molecules are assumed to be equally likely to be anywhere in the box.

But how is this distribution affected if in fact there is some kind of potential pulling the molecules to one end of the box? In fact, we’ve already solved this problem, in the discussion earlier on the isothermal atmosphere. Consider a really big box, kilometers high, so air will be significantly denser towards the bottom. Assume the temperature is uniform throughout. We found under these conditions that with Boyle’s Law expressed in the form

$$\rho = CP$$

the atmospheric density varied with height as

$$\rho = \rho_0 e^{-Cgh}.$$

Now we know that Boyle’s Law is just the fixed temperature version of the Gas Law $PV = nRT$, and the density

$$\rho = \frac{Nm}{V}$$

with $N$ the total number of molecules and $m$ the molecular mass.

Rearranging,

$$V = \frac{Nm}{\rho} = \frac{nN_A m}{\rho}$$

for $n$ moles of gas, each mole containing Avogadro’s number $N_A$ molecules.

Putting this together with the Gas Law,

$$PV = P\,\frac{nN_A m}{\rho} = nRT,$$

so

$$\rho = \frac{N_A m}{RT}\,P = \frac{m}{kT}\,P, \quad\text{that is,}\quad C = \frac{m}{kT},$$

where Boltzmann’s constant $k = R/N_A$ as discussed previously.

The dependence of gas density on height can therefore be written

$$\rho = \rho_0 e^{-mgh/kT}.$$

The important point here is that $mgh$ is the potential energy of the molecule, and the distribution we have found is exactly parallel to Maxwell’s velocity distribution, the potential energy now playing the role that kinetic energy played in that case.

We’re now ready to put together Maxwell’s velocity distribution with this height distribution, to find out how the molecules are distributed in the atmosphere, both in velocity space and in ordinary space. In other words, in a six-dimensional space!

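Our result is:

$$f(x, y, z, v_x, v_y, v_z) \propto e^{-\left(\frac{1}{2}mv^2 + mgh\right)/kT} = e^{-E/kT}.$$

That is, the probability of a molecule having total energy $E$ is proportional to $e^{-E/kT}$.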

This is the Boltzmann, or Maxwell-Boltzmann, distribution. It turns out to be correct for any type of potential energy, including that arising from forces between the molecules themselves.
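For the gravitational case the Boltzmann factor $e^{-mgh/kT}$ sets a natural length, the height $kT/mg$ over which the density falls by a factor of $e$. The sketch below estimates it for nitrogen in an isothermal atmosphere. The nitrogen mass, the 300 K temperature, the value of $g$ and the function names are assumptions chosen for illustration.

```python
import math

K_BOLTZMANN = 1.38e-23          # J/K, the value quoted in the text
M_N2 = 28.0 * 1.66e-27          # kg, assumed mass of a nitrogen molecule
G = 9.81                        # m/s^2, assumed uniform gravitational acceleration


def scale_height(mass, temperature):
    """Height kT/mg over which the density drops by a factor of e."""
    return K_BOLTZMANN * temperature / (mass * G)


def density_ratio(height, mass, temperature):
    """Boltzmann factor rho(h)/rho(0) = exp(-m g h / kT)."""
    return math.exp(-mass * G * height / (K_BOLTZMANN * temperature))


H = scale_height(M_N2, 300.0)
print("scale height (m)       :", H)
print("density ratio at 1 km  :", density_ratio(1000.0, M_N2, 300.0))
print("density ratio at h = H :", density_ratio(H, M_N2, 300.0))
```

The scale height comes out at several kilometers, consistent with the remark that the box must be kilometers high for the density variation to matter.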

Degrees of Freedom and Equipartition of Energy

By a “degree of freedom” we mean a way in which a molecule is free to move, and thus have energy: in this case, just the $x$, $y$ and $z$ directions. Boltzmann reformulated Maxwell’s analysis in terms of degrees of freedom, stating that there was an average energy $\frac{1}{2}kT$ in each degree of freedom, to give total average kinetic energy $3 \times \frac{1}{2}kT = 1.5kT$, so the specific heat per molecule is presumably $1.5k$, and given that $k = R/N_A$, the specific heat per mole comes out at $1.5R$. In fact, this is experimentally confirmed for monatomic gases. However, it is found that diatomic gases can have specific heats of $2.5R$ and even $3.5R$. This is not difficult to understand: these molecules have more degrees of freedom. A dumbbell molecule can rotate about two directions perpendicular to its axis. A diatomic molecule could also vibrate. Such a simple harmonic oscillator motion has both kinetic and potential energy, and it turns out to have total energy $kT$ in thermal equilibrium. Thus, reasonable explanations for the specific heats of various gases can be concocted by assuming a contribution $\frac{1}{2}k$ from each degree of freedom.

But there are problems. Why shouldn’t the dumbbell rotate about its axis? Why do the atoms of a monatomic gas not rotate at all? Even more ominously, the specific heat of hydrogen, $2.5R$ at room temperature, drops to $1.5R$ at lower temperatures. These problems were not resolved until the advent of quantum mechanics.
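The counting behind these specific heats can be written out as a short calculation. The sketch below assigns $\frac{1}{2}R$ per mole to each quadratic energy term, with a vibration counted as two terms (kinetic plus potential) in line with its total energy $kT$. The value of $R$, the labels and the function name are assumptions for illustration.

```python
R_GAS = 8.314   # J/(mol K), assumed value of the gas constant


def molar_specific_heat(n_quadratic_terms):
    """Equipartition estimate: (1/2) R per quadratic energy term, per mole."""
    return 0.5 * n_quadratic_terms * R_GAS


cases = {
    "monatomic (3 translations)": 3,
    "diatomic, rotating (3 translations + 2 rotations)": 5,
    "diatomic, rotating and vibrating (adds 2 vibrational terms)": 7,
}
for label, terms in cases.items():
    c = molar_specific_heat(terms)
    print(f"{label}: {c / R_GAS:.1f} R = {c:.2f} J/(mol K)")
```

This reproduces the $1.5R$, $2.5R$ and $3.5R$ values, but it cannot explain why some terms fail to contribute at low temperature, which is the puzzle quantum mechanics resolved.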

Brownian Motion

One of the most convincing demonstrations that gases really are made up of fast moving molecules is Brownian motion, the observed constant jiggling around of tiny particles, such as fragments of ash in smoke. This motion was first noticed by a Scottish botanist, who initially assumed he was looking at living creatures, but then found the same motion in what he knew to be particles of inorganic material. Einstein showed how to use Brownian motion to estimate the size of atoms.
